District-level administrators carefully analyze their own performance data, practicing what they preach to local educators.

A little more than a decade ago, the Harvard Graduate School of Education and the Boston Public Schools joined forces to create the Data Wise Improvement Process, a step-by-step approach to analyzing and using data to improve schools. It was intended to help teachers work collaboratively to improve their classroom instruction. Increasingly, though, district-level administrators also have begun to rely on Data Wise, as they’ve learned that this sort of collaborative, data-driven inquiry can help develop a systemwide culture of improvement (Coburn, Touré, & Yamashita, 2009; Park, Daly, & Guerra, 2013).

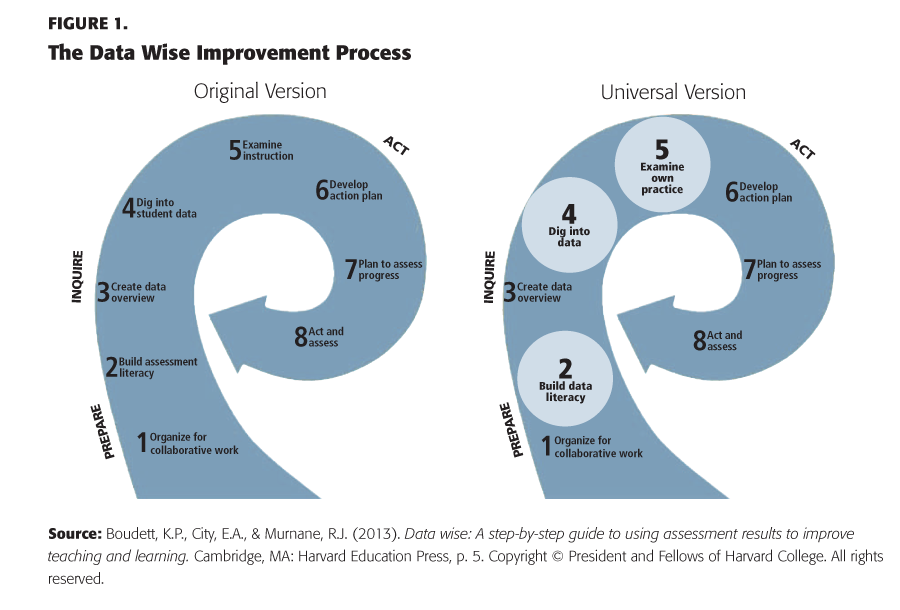

The original Data Wise Improvement Process (see Fig. 1, left side) was designed for school-based teams where the people providing services are teachers and the people being served (the “learners”) are students. But when district leaders in Prince George’s County, Maryland, began using Data Wise across their school system, they identified the need for the “universal” Data Wise Improvement Process (Fig. 1, right side), which is meant for any team — such as a group of central office administrators — that works collaboratively to use data for improvement.

In some ways, the two versions are identical:

- Teams begin by laying a foundation for engaging in collaborative work — the Prepare phase.

- They identify the specific challenges facing the learners they serve, and they examine the efficacy of their own practices — the Inquire phase.

- They adopt practices most likely to address the challenges at hand — the Act phase.

However, the two versions of Data Wise also differ in a few ways. Where the original process focuses mainly on student performance, the universal version assumes a broader interest in the performance of the whole school system. Thus, Build Assessment Literacy becomes Build Data Literacy in the universal version, Dig into Student Data is broadened to Dig into Data, and Examine Instruction becomes Examine Own Practice.

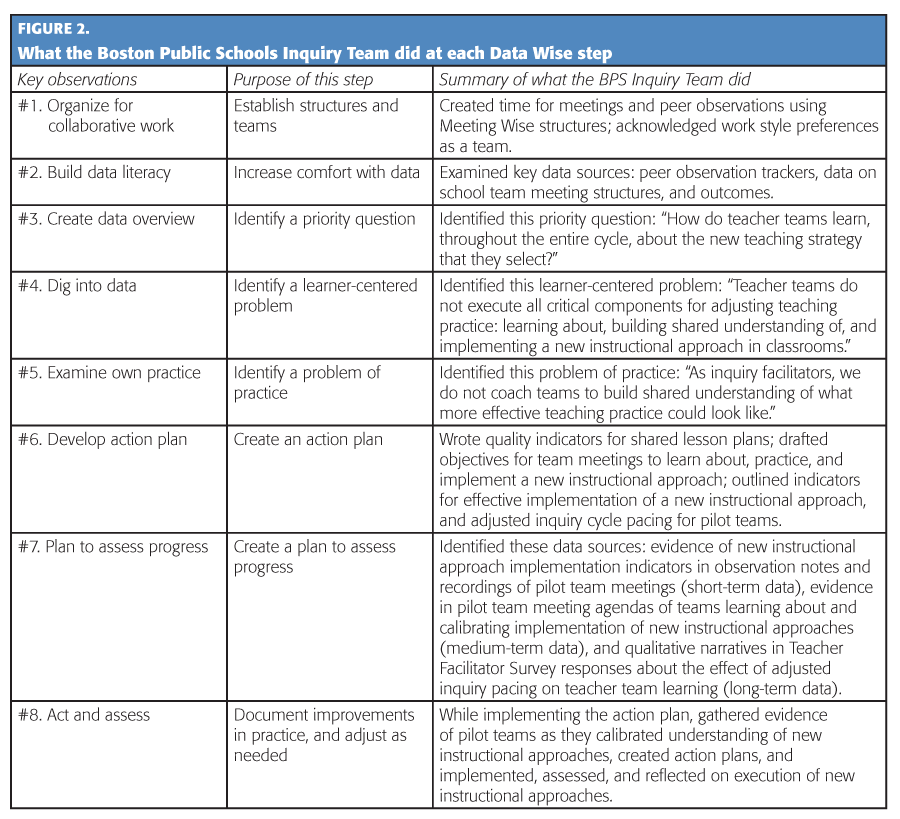

In the Boston Public Schools, the universal Data Wise Improvement Process enabled the six members of an Inquiry Team — all of them central office administrators from BPS — to improve how they support teachers' efforts to strengthen instruction. Altogether, these six administrators are responsible for helping roughly 100 teacher teams in 30 Boston schools as they use the Data Wise process to improve classroom instruction. By engaging in their own collaborative inquiry cycle, Inquiry Team members hoped to become more effective coaches and to identify ways to strengthen the instructional core across the schools they support. Below, we describe the specific steps they took. (For a brief overview of these steps, see Fig. 2.)

Step #1: Organize for collaborative work.

On any team, making time for collaborative work and setting clear expectations for effective participation are crucial. To organize its time together, particularly its weekly meetings, the BPS Inquiry Team used guidelines and protocols (described at length in Boudett & City, 2014) that, for example, require team members to rotate, from one meeting to the next, among the key roles of timekeeper, note taker, and, most important, facilitator. The facilitator ensures that the agenda has clear objectives, time limits for each objective, and an opportunity at the end of the meeting to discuss what worked well and how the team could improve the next meeting.

Step #2: Build data literacy.

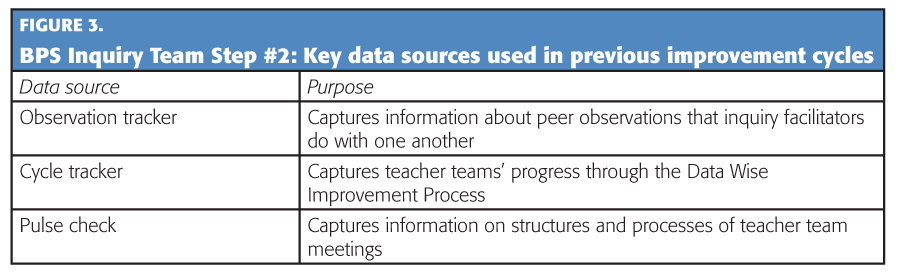

At the beginning of its Data Wise process, the three veteran members of the BPS team introduced the three new members to data tools they had created and used to assess their own performance and calibrate their work with local teachers (see Fig. 3). The team used the "I notice, I wonder" protocol to dive more deeply into these data sources. In this protocol, participants share low-inference statements about what they notice in a data source, then ask the questions that the data source raises for them. Once team members had shared key noticings and wonderings about the data sources they used as a team, they were ready for the Inquire phase.

Step #3: Create data overview.

In this step, the team defined the specific question that would guide its work through the Inquire phase. Before moving into that phase, however, a team first has to decide precisely whose learning it wants to address, what its focus area for improvement should be, and which data sources will be useful.

School-based teacher teams can assume, right off the bat, that the point of their inquiry is to improve student learning and that they should begin by reviewing student-level data, looking for performance trends that seem particularly striking or troubling and that might suggest an important question to explore further. But when the team consists of system-level administrators, there’s no reason to assume the Inquiry process will focus on student learning or that it will be useful to look at student-level data. For example, a team from the district’s transportation department might explore issues relevant to the work of bus drivers, while community engagement liaisons might choose to address the needs of parents. For the BPS Inquiry Team, the goal was to inquire into what was being learned by the school-based teacher teams that they coached, using records and information collected during their work with those teacher leaders.


At this point in the Data Wise process, the challenge is to narrow the scope of inquiry to a manageable size, focusing on a realistic and useful topic that the team can address in the given time frame. (An inquiry team from the district transportation department, for instance, might narrow its focus to bus drivers' safety training, while community engagement staff might explore outreach to parents of English learners.) Further, the topic should relate to the actual needs of the individuals to be served, and it should be one that can be examined closely using a range of data sources. For its part, the BPS Inquiry Team decided to focus its inquiry cycle on implementing new instructional approaches. In recent school visits, team members had noted considerable variation in the quality and execution of the new instructional approaches chosen by school-based teams, and they agreed that this would be an important topic to investigate further.

The BPS team members then reviewed various data from school-based teacher teams, including notes and records about new instructional approaches. They saw that teachers were spending significant meeting time analyzing data about current teaching practices (for example, they were looking closely at lesson plans, student work, and classroom assessments), but they weren’t spending much time learning about new approaches they were considering. Taking these observations into account, the BPS team then came up with a list of questions they might use to guide their inquiry before settling on one that seemed particularly important: “How do the school teams learn, throughout the Inquiry cycle, about the new teaching strategy that they select?”

Step #4: Dig into data.

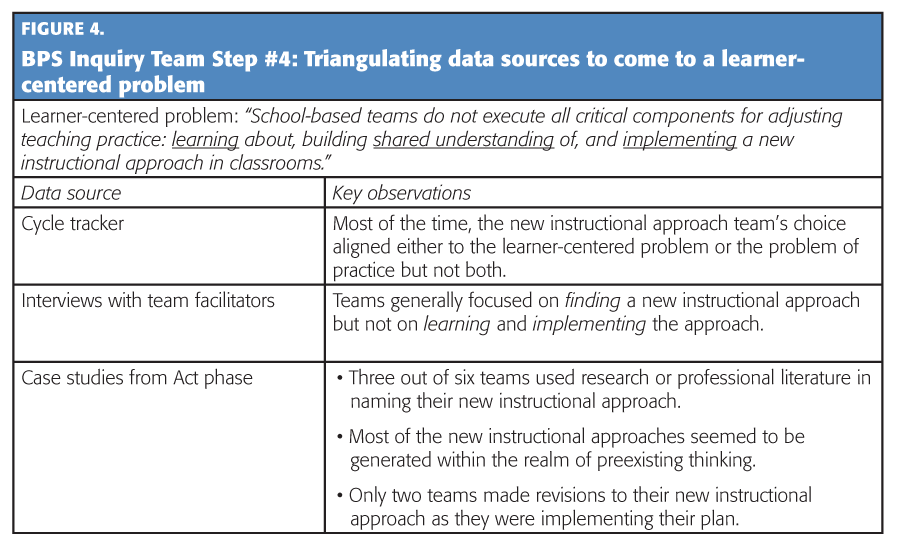

Armed with their priority question, the BPS team began to dig deeper into the data they had collected (including interview notes, case studies, and planning documents; see Fig. 4) so they could identify a specific, learner-centered problem to address. When they reviewed records from the cycle tracker (one of the data tools they had created, which they used to track each team's progress through the Data Wise Improvement Process), they found that teacher teams tended to choose new instructional approaches that promised to solve either a problem facing their students or one facing their teachers, but not both. When they looked closely at the interview notes, they found that their initial review of the data had exposed only the tip of the iceberg: Not only had many teacher leaders chosen instructional approaches without spending much time learning about them, but they had spent even less time learning how to use them in the classroom. Further, the case studies revealed that when teachers tried to implement new instructional approaches, they tended to assimilate them into their existing beliefs and practices, without expanding beyond their familiar teaching repertoires and without allocating the time and resources needed to make meaningful changes.

The BPS Inquiry Team had found its problem for further inquiry: “School-based teams do not execute all critical components for adjusting teaching practice: learning about, building shared understanding of, and implementing a new instructional approach in classrooms.”

Step #5: Examine own practice.

In Step #5, members of the BPS Inquiry Team turned the mirror on their own practice by analyzing a wide range of materials they had used to support the school-based teacher teams, including questionnaires, documents, and other artifacts from the various professional development sessions and coaching activities they had led. They also observed five meetings — a combination of teacher team meetings and one-on-one coaching conversations between a BPS Inquiry Facilitator and a teacher team facilitator.

Upon reviewing the artifacts and observation transcripts, the BPS team members realized that they had neglected to give teacher teams clear and consistent definitions of strong instructional practice. Nor had they shown them vivid examples and illustrations of such practice or described the kinds of dramatic changes that a new approach to teaching might entail. After debating how best to define this problem or to express what was missing from their own work as instructional coaches, they settled on the following statement: “As inquiry facilitators, we do not coach teams to build a shared understanding of what more effective teaching practice could look like.”

Step #6: Develop action plan.

As the BPS Inquiry Team moved into the Act phase of the Data Wise process, their next task was to decide precisely what they would do to address their problem of practice.

Rather than rushing to come up with a strategy, though, team members took a deliberate approach: They began by reading an article about adult learning, which helped them understand why their problem of practice had emerged in the first place. Then they used a recommended protocol to dig more deeply into the problem so they would be primed to develop well-informed strategies to solve it. Next, they used a brainstorming protocol to generate possible actions, and they voted on which of those actions to include in their action plan.

They decided upon a two-part strategy. First, they would revise their pacing calendar to give a group of 15 pilot teacher teams more time to learn about and implement their new instructional approaches. Instead of completing three inquiry cycles in an academic year as originally planned, the pilot teams would complete two cycles per year, with extra time devoted to the Act phase of the process. Second, the BPS team planned to create materials that would provide structure and support for this additional time. For example, they drafted sample meeting agendas that school-based teams could use to learn about, practice, and guide the implementation of new instructional approaches. They also created a menu of recommended instructional strategies, along with resources introducing those techniques and illustrating their use. In effect, the BPS team created a toolkit that school teams could use to guide themselves through the Act phase of the Data Wise process when BPS coaches weren't available.

Step #7: Plan to assess progress.

Next, the facilitators set goals for their "learners" and chose assessments to measure their progress. The BPS team decided that its goal for all 15 pilot teams would be to use their extra time (during the Act phase of the process, or Steps #6-#8) to learn about a new instructional strategy, put it into practice, make adjustments, and measure their progress toward effective practice. To measure their own progress in the short term, the BPS team members decided to analyze transcripts and audio recordings of pilot team meetings, looking for evidence that the teams were, in fact, allocating meeting time to learn about and implement new instructional approaches. In the medium term, they would use their own pulse check data to measure progress. And in the long term, they would survey local teacher leaders, capturing their beliefs about the value of having extra time and support to learn about and implement new teaching strategies.

Step #8: Act and assess.

The final step in the Data Wise Improvement Process is to implement the plans defined in Steps #6 and #7. In this case, the BPS team selected the 15 teacher teams to pilot the new approach, then designed and facilitated a pair of professional development workshops for them and other school-based teams. In the first workshop, they offered practical tips on how to learn about and implement new instructional strategies; in the second, they introduced teachers to a protocol that they had developed, inspired by the book Practice Perfect (Lemov, Woolway, & Yezzi, 2012), which outlines rules for engaging in deliberate, performance-changing practice. Then, using this protocol, they guided teacher teams through an exercise that illustrated one of the instructional strategies they had identified earlier.

Further, the BPS team acted on the first part of their assessment plan: They recorded and transcribed discussions from pilot team meetings and analyzed them to see whether the teacher teams were in fact devoting significant amounts of time to learning about and choosing new instructional strategies. Happily, they found that this was the case. According to the evidence, the pilot teams were investigating new instructional approaches, calibrating their understanding of what those approaches would look like in practice, implementing them, assessing their impact, and reflecting on changes and adjustments that should be made.

Recognizing the importance of giving teachers more time to learn deeply about new instructional approaches before adopting and implementing them, the BPS Inquiry Team now offers school-based teams the option to implement two or three cycles per year, and all teams now devote more time to the final steps of the process (the Act phase).

Not only does this process offer a powerful lever for improving practice at both the school and system levels, but it also lets system-level team members "walk the talk" by doing the very same kind of work that they ask school-based team members to do. In other words, BPS team members are not asking the people they serve to do anything they are not doing themselves.

References

Boudett, K.P., & City, E.A. (2014). Meeting wise: Making the most of collaborative time for educators. Cambridge, MA: Harvard Education Press.

Boudett, K.P., City, E.A., & Murnane, R.J. (Eds.). (2013). Data wise: A step-by-step guide to using assessment results to improve teaching and learning. Cambridge, MA: Harvard Education Press.

Coburn, C.E., Touré, J., & Yamashita, M. (2009). Evidence, interpretation, and persuasion: Instructional decision making at the district central office. Teachers College Record, 111(4), 1115-1161.

Lemov, D., Woolway, E., & Yezzi, K. (2012). Practice perfect: 42 rules for getting better at getting better. Hoboken, NJ: John Wiley & Sons.

Park, V., Daly, A.J., & Guerra, A.W. (2013). Strategic framing: How leaders craft the meaning of data use for equity and learning. Educational Policy, 27(4), 645-675.

R&D appears in each issue of Kappan with the assistance of the Deans Alliance, which is composed of the deans of the education schools/colleges at the following universities: George Washington University, Harvard University, Michigan State University, Northwestern University, Stanford University, Teachers College Columbia University, University of California, Berkeley, University of California, Los Angeles, University of Colorado, University of Michigan, University of Pennsylvania, and University of Wisconsin.

Originally published in September 2017 Phi Delta Kappan 99 (1), 25-30.

© 2017 Phi Delta Kappa International. All rights reserved.

ABOUT THE AUTHORS

Kathryn Parker Boudett

KATHRYN PARKER BOUDETT is a lecturer in education and director of the Data Wise Project, Harvard Graduate School of Education, Cambridge, Mass.

Mary Dillman

MARY DILLMAN is deputy executive director of data and accountability, Boston Public Schools, Boston, Mass., and a certified Data Wise coach.

Meghan Lockwood

MEGHAN LOCKWOOD is a certified Data Wise coach.