Student performance on standardized tests reflects more than just mastery of the material.

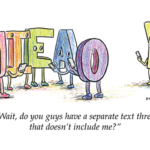

This is one of my favorite jokes:

Teacher: What’s the difference between ignorance and apathy?

Student: I don’t know, and I don’t care!

I especially like the joke because it touches on a question I’ve been considering for 15 years: When a student gets a test item wrong, is it because they didn’t know the answer (ignorance) or is it because they didn’t care about identifying the correct answer (apathy)?

When we give a test, we expect the student’s answers to reflect what they know and can do. That is, we assume the student is engaged in taking the test. But the reality is that students do sometimes disengage during tests. And whenever students show substantial disengagement, the resulting scores tend to be lower than the scores they would have received had they been fully engaged. This makes score interpretation more challenging, because it can be difficult to discern the degree to which a test score reflects a student’s test-taking effort rather than their true achievement level.

How can we detect disengagement? In computer-based tests, the time students spend on test items provides valuable information. When students become disengaged, they tend to exhibit rapid-guessing behavior, answering an item in much less time than needed to read it, solve it, and select an answer. The identification of rapid guesses allows us to unobtrusively detect disengagement down to the level of individual items. This provides a powerful, objective method for assessing a student’s engagement during a test.
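To make the idea concrete, here is a minimal sketch of how rapid guesses might be flagged from response times. It assumes each item has a time cutoff below which a response is treated as a rapid guess. The cutoff values, the `Response` record, and the function names are all hypothetical and chosen only for illustration; they are not taken from any particular testing program.

```python
from dataclasses import dataclass


@dataclass
class Response:
    item_id: str
    seconds: float    # time the student spent on the item
    threshold: float  # hypothetical per-item cutoff, in seconds (an assumption)


def is_rapid_guess(response: Response) -> bool:
    """Flag a response given faster than the item's assumed cutoff."""
    return response.seconds < response.threshold


def engaged_share(responses: list[Response]) -> float:
    """Proportion of responses that were not flagged as rapid guesses."""
    if not responses:
        raise ValueError("no responses to evaluate")
    flagged = sum(is_rapid_guess(r) for r in responses)
    return 1 - flagged / len(responses)


# Made-up response times for one student on four items.
responses = [
    Response("item-1", seconds=42.0, threshold=5.0),
    Response("item-2", seconds=2.1, threshold=5.0),
    Response("item-3", seconds=37.5, threshold=8.0),
    Response("item-4", seconds=1.4, threshold=8.0),
]
flags = {r.item_id: is_rapid_guess(r) for r in responses}
share = engaged_share(responses)
```

In this made-up example, two of the four responses fall under their cutoffs, so half of the student's responses would be treated as engaged. How the cutoffs are set matters a great deal in practice, and this sketch does not address that choice.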

Research on rapid guessing (Wise, 2017; Wise & Kuhfeld, 2019) has taught us about the dynamics of rapid guessing and how its occurrence is influenced by characteristics of the items, the students, and the context in which testing occurs. We’ve learned a lot about the degree to which disengagement distorts scores, and about how to adjust scores accordingly. And, perhaps most important, we’ve developed innovative computer-based tests that can detect rapid guessing and curtail its occurrence by notifying test proctors or messaging the student directly.

What does this mean for educators and policy makers?

It’s not exactly breaking news that some students become disengaged during testing, but it’s important for educators to know about these new methods for detecting and managing disengagement. Because we can now identify rapid guessing, we can distinguish much more confidently between poor mastery of the material and low engagement in the test. Further, these research advances invite us to identify testing practices that are more engaging and that minimize rapid guessing.

Most important, the more we understand about test disengagement, the more we’re forced to acknowledge that not all test scores are trustworthy — and that we may need to rethink the inferences we commonly make about achievement gaps, statewide proficiency rates, and teacher performance.

Test performance is a function of both achievement and engagement. The good news is that most students do show real engagement on tests, even when they perceive no personal consequences for their performance. For these students, we should be confident in their test results. However, disengaged test taking occurs too often to ignore, and some test scores shouldn’t be trusted.

Although disengagement will continue to be present in our test data, we now have powerful new ways to detect its presence and are developing effective methods for managing its effects. These tools should become part of all computer-based achievement testing programs. Professionally, we have a responsibility to be neither ignorant of these tools nor apathetic about their use.

References

Wise, S.L. (2017). Rapid-guessing behavior: Its identification, interpretations, and implications. Educational Measurement: Issues and Practice, 36 (4), 52-61.

Wise, S.L. & Kuhfeld, M. (2019). What happens when test takers disengage? Understanding and addressing rapid guessing. (The Collaborative for Student Growth at NWEA Research Brief). Portland, OR: NWEA.

Citation: Wise, S.L. (2019, Oct. 28). The emerging science of test-taking disengagement. Phi Delta Kappan, 101 (3), 72.

ABOUT THE AUTHOR

STEVEN L. WISE is a senior research fellow at Northwest Evaluation Association.